satanicmangoes/final-result


- .gitattributes +1 -0

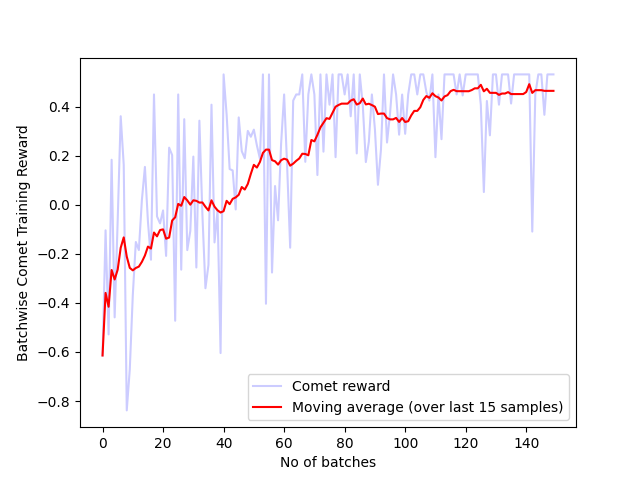

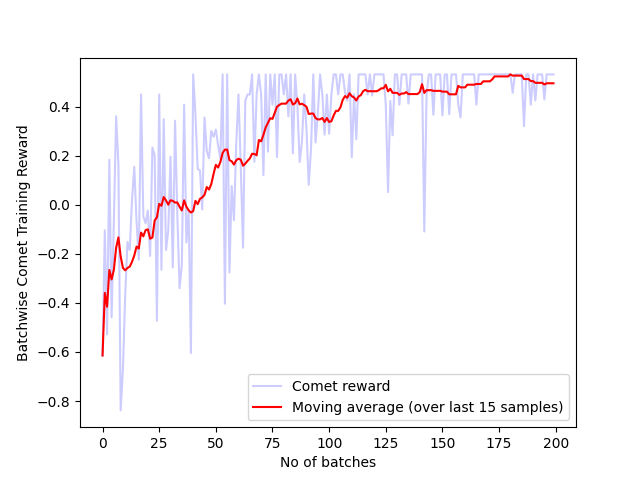

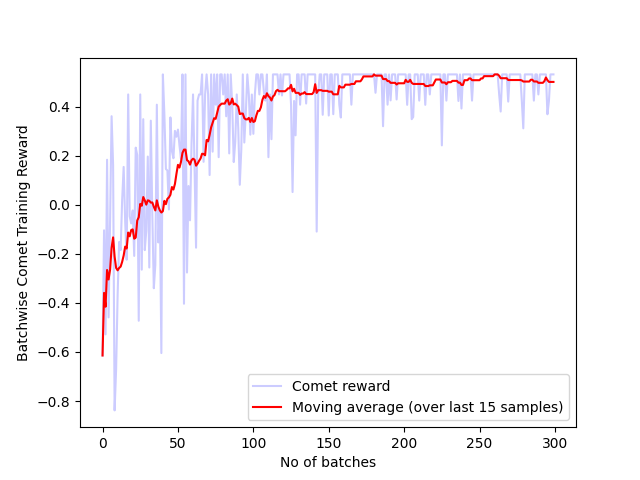

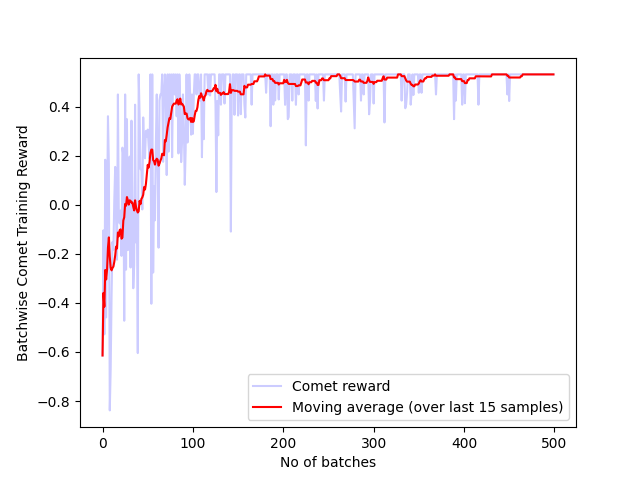

- Batch_comet_reward_100_t5.png +0 -0

- Batch_comet_reward_125_t5.png +0 -0

- Batch_comet_reward_150_t5.png +0 -0

- Batch_comet_reward_175_t5.png +0 -0

- Batch_comet_reward_200_t5.png +0 -0

- Batch_comet_reward_225_t5.png +0 -0

- Batch_comet_reward_250_t5.png +0 -0

- Batch_comet_reward_25_t5.png +0 -0

- Batch_comet_reward_275_t5.png +0 -0

- Batch_comet_reward_300_t5.png +0 -0

- Batch_comet_reward_325_t5.png +0 -0

- Batch_comet_reward_350_t5.png +0 -0

- Batch_comet_reward_375_t5.png +0 -0

- Batch_comet_reward_400_t5.png +0 -0

- Batch_comet_reward_425_t5.png +0 -0

- Batch_comet_reward_450_t5.png +0 -0

- Batch_comet_reward_475_t5.png +0 -0

- Batch_comet_reward_500_t5.png +0 -0

- Batch_comet_reward_50_t5.png +0 -0

- Batch_comet_reward_525_t5.png +0 -0

- Batch_comet_reward_550_t5.png +0 -0

- Batch_comet_reward_575_t5.png +0 -0

- Batch_comet_reward_600_t5.png +0 -0

- Batch_comet_reward_75_t5.png +0 -0

- Batch_pronoun_reward_100_t5.png +0 -0

- Batch_pronoun_reward_125_t5.png +0 -0

- Batch_pronoun_reward_150_t5.png +0 -0

- Batch_pronoun_reward_175_t5.png +0 -0

- Batch_pronoun_reward_200_t5.png +0 -0

- Batch_pronoun_reward_225_t5.png +0 -0

- Batch_pronoun_reward_250_t5.png +0 -0

- Batch_pronoun_reward_25_t5.png +0 -0

- Batch_pronoun_reward_275_t5.png +0 -0

- Batch_pronoun_reward_300_t5.png +0 -0

- Batch_pronoun_reward_325_t5.png +0 -0

- Batch_pronoun_reward_350_t5.png +0 -0

- Batch_pronoun_reward_375_t5.png +0 -0

- Batch_pronoun_reward_400_t5.png +0 -0

- Batch_pronoun_reward_425_t5.png +0 -0

- Batch_pronoun_reward_450_t5.png +0 -0

- Batch_pronoun_reward_475_t5.png +0 -0

- Batch_pronoun_reward_500_t5.png +0 -0

- Batch_pronoun_reward_50_t5.png +0 -0

- Batch_pronoun_reward_525_t5.png +0 -0

- Batch_pronoun_reward_550_t5.png +0 -0

- Batch_pronoun_reward_575_t5.png +0 -0

- Batch_pronoun_reward_600_t5.png +0 -0

- Batch_pronoun_reward_75_t5.png +0 -0

- README.md +52 -0

.gitattributes

CHANGED

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+tokenizer.json filter=lfs diff=lfs merge=lfs -text
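As a rough illustration of what the added `tokenizer.json` line does, the sketch below checks file names against the four patterns visible in this hunk. It is an approximation only: it uses Python's `fnmatch` on the basename, which is not Git's actual attribute-matching logic, and the helper name `is_lfs_tracked` is hypothetical. The rest of `.gitattributes` is not shown here, so a `False` result does not prove a file is outside LFS in the real repository.

```python
from fnmatch import fnmatch
from pathlib import PurePosixPath

# Patterns copied from the hunk; the last one is the line this commit adds.
# The remaining .gitattributes entries are not visible in the diff and are omitted.
LFS_PATTERNS = ["*.zip", "*.zst", "*tfevents*", "tokenizer.json"]


def is_lfs_tracked(path: str, patterns=LFS_PATTERNS) -> bool:
    """Approximate check: match the file's basename against each pattern.

    Real gitattributes matching has more rules (for example `**` and
    directory-anchored patterns); this shortcut only covers slash-free patterns.
    """
    name = PurePosixPath(path).name
    return any(fnmatch(name, pattern) for pattern in patterns)


for candidate in ["tokenizer.json", "README.md", "runs/events.out.tfevents.123"]:
    print(candidate, is_lfs_tracked(candidate))
```

For an authoritative answer on a real checkout, `git check-attr filter -- <path>` reports the attribute Git itself resolves.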

- Batch_comet_reward_100_t5.png ADDED
- Batch_comet_reward_125_t5.png ADDED
- Batch_comet_reward_150_t5.png ADDED
- Batch_comet_reward_175_t5.png ADDED
- Batch_comet_reward_200_t5.png ADDED
- Batch_comet_reward_225_t5.png ADDED
- Batch_comet_reward_250_t5.png ADDED
- Batch_comet_reward_25_t5.png ADDED
- Batch_comet_reward_275_t5.png ADDED
- Batch_comet_reward_300_t5.png ADDED
- Batch_comet_reward_325_t5.png ADDED
- Batch_comet_reward_350_t5.png ADDED
- Batch_comet_reward_375_t5.png ADDED
- Batch_comet_reward_400_t5.png ADDED
- Batch_comet_reward_425_t5.png ADDED
- Batch_comet_reward_450_t5.png ADDED
- Batch_comet_reward_475_t5.png ADDED
- Batch_comet_reward_500_t5.png ADDED
- Batch_comet_reward_50_t5.png ADDED
- Batch_comet_reward_525_t5.png ADDED
- Batch_comet_reward_550_t5.png ADDED
- Batch_comet_reward_575_t5.png ADDED
- Batch_comet_reward_600_t5.png ADDED
- Batch_comet_reward_75_t5.png ADDED
- Batch_pronoun_reward_100_t5.png ADDED
- Batch_pronoun_reward_125_t5.png ADDED
- Batch_pronoun_reward_150_t5.png ADDED
- Batch_pronoun_reward_175_t5.png ADDED
- Batch_pronoun_reward_200_t5.png ADDED
- Batch_pronoun_reward_225_t5.png ADDED
- Batch_pronoun_reward_250_t5.png ADDED
- Batch_pronoun_reward_25_t5.png ADDED
- Batch_pronoun_reward_275_t5.png ADDED
- Batch_pronoun_reward_300_t5.png ADDED
- Batch_pronoun_reward_325_t5.png ADDED
- Batch_pronoun_reward_350_t5.png ADDED
- Batch_pronoun_reward_375_t5.png ADDED
- Batch_pronoun_reward_400_t5.png ADDED
- Batch_pronoun_reward_425_t5.png ADDED
- Batch_pronoun_reward_450_t5.png ADDED
- Batch_pronoun_reward_475_t5.png ADDED
- Batch_pronoun_reward_500_t5.png ADDED
- Batch_pronoun_reward_50_t5.png ADDED
- Batch_pronoun_reward_525_t5.png ADDED
- Batch_pronoun_reward_550_t5.png ADDED
- Batch_pronoun_reward_575_t5.png ADDED
- Batch_pronoun_reward_600_t5.png ADDED
- Batch_pronoun_reward_75_t5.png ADDED

README.md

ADDED

@@ -0,0 +1,52 @@

---
license: cc-by-nc-4.0
base_model: facebook/nllb-200-distilled-600M
tags:
- trl
- iterative-sft
- generated_from_trainer
model-index:
- name: working
  results: []
---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You
should probably proofread and complete it, then remove this comment. -->

# working

This model is a fine-tuned version of [facebook/nllb-200-distilled-600M](https://huggingface.co/facebook/nllb-200-distilled-600M) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:
- learning_rate: 6e-06
- train_batch_size: 4
- eval_batch_size: 8
- seed: 42
- gradient_accumulation_steps: 16
- total_train_batch_size: 64
- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08
- lr_scheduler_type: linear
- training_steps: 1000
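The hyperparameters listed above relate to each other in a simple way, which the following sketch works through. It is illustrative rather than the training script: `num_devices` and `warmup_steps` are not stated in the card and are assumed to be 1 and 0, and the class and function names are hypothetical. Under those assumptions the per-device batch size times the accumulation steps reproduces the reported `total_train_batch_size`, and the `linear` scheduler is modeled as a straight decay from the peak rate to zero over `training_steps`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hyperparameters:
    # Values copied from the card.
    learning_rate: float = 6e-06
    train_batch_size: int = 4
    gradient_accumulation_steps: int = 16
    training_steps: int = 1000
    # Assumptions: neither value appears in the card.
    num_devices: int = 1
    warmup_steps: int = 0


def total_train_batch_size(hp: Hyperparameters) -> int:
    """Number of examples that contribute to one optimizer step."""
    return hp.train_batch_size * hp.gradient_accumulation_steps * hp.num_devices


def linear_lr(hp: Hyperparameters, step: int) -> float:
    """Learning rate at `step` for linear warmup followed by linear decay to zero."""
    if step < hp.warmup_steps:
        return hp.learning_rate * step / max(1, hp.warmup_steps)
    remaining = max(0, hp.training_steps - step)
    return hp.learning_rate * remaining / max(1, hp.training_steps - hp.warmup_steps)


hp = Hyperparameters()
print("total_train_batch_size:", total_train_batch_size(hp))  # 4 * 16 * 1 = 64
for step in (0, 250, 500, 750, 1000):
    print(step, linear_lr(hp, step))
```

With one device assumed, 1000 optimizer steps at 64 examples each correspond to 64,000 training examples processed, counting repeats.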

### Framework versions

- Transformers 4.44.0
- Pytorch 2.4.0
- Datasets 3.0.0
- Tokenizers 0.19.1
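When trying to reproduce the run, it can help to compare the local environment against the framework versions listed in the card. The sketch below does this with the standard library only. The PyPI distribution names are assumptions (the card says "Pytorch", which is normally installed as `torch`), and the helper names are hypothetical.

```python
from importlib.metadata import PackageNotFoundError, version

# Versions copied from the card; the distribution names are assumed.
EXPECTED = {
    "transformers": "4.44.0",
    "torch": "2.4.0",
    "datasets": "3.0.0",
    "tokenizers": "0.19.1",
}


def installed_version(package: str):
    """Return the installed version string, or None if the package is absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


def compare_versions(expected=EXPECTED) -> dict:
    """Map each package to 'match', 'missing', or a note about the version found."""
    report = {}
    for package, wanted in expected.items():
        found = installed_version(package)
        if found is None:
            report[package] = "missing"
        elif found == wanted or found.startswith(wanted + "+"):
            # Builds with a local suffix such as "+cu121" count as a match.
            report[package] = "match"
        else:
            report[package] = f"differs (found {found})"
    return report


for package, status in compare_versions().items():
    print(f"{package}: {status}")
```

A mismatch does not necessarily prevent loading the model; it only flags where the environment differs from the one recorded in the card.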